After my first two weeks of whole group online teaching this term, I published this post about my experience of adapting to this way of teaching (behind the curve because we didn’t do any whole group teaching on our course last term, only short small group tutorials, which I mentioned briefly in my post about our experience of throwing an EAP course online at short notice). Another two weeks have passed so here is the next instalment! (It’s ok, we only have 6 teaching weeks this term before the final 3 weeks become all about assessment, so there will only be 3 of these posts in total!)

Week 3

The theme for this week was “Overpopulation – myth or problem?”. Having established in Week 2 that I can do break-out rooms (woo!), I decided to try a speaking-focused lesson with a focus on paraphrasing and summarising sources when speaking (which they will need to do for their Coursework 2 presentations). In preparation for the lesson, students had to find a source to support the position they had been assigned (half the class were assigned ‘myth’, half were assigned ‘problem’). In total, there were 4 break-out room tasks, of which the final one was the main discussion task. The first 3 tasks involved random groupings, while for the main task I did customised groupings, because groups had to have a balance of “myth” and “problem” viewpoints and I had to take into account attendance patterns thus far (i.e. I wanted to make sure that, as well as being balanced viewpoint-wise, no group had more than one student with patchy attendance!).

This was the first task (yes, somehow I forgot about “A”…! Students didn’t say anything about it, if they noticed. Of course they may have thought the chat box warmer task was “A”!)

This task reviews the skills learners developed and were tested on in Coursework 1 Source Report. In all the breakout room tasks for this lesson, I included times on the slides to give students an indication of how long they would have in their breakout room to complete the task.

Positive of this task: clear and achievable for students; provided an opportunity for speaking and for warming up their “working in a breakout room” mode!

Problem with this task: no tangible output = room for students to slack off. In future I would do something like get groups to report back in the main room, answering questions such as “In your group, whose source was the most current? What different search methods did your group discuss?”

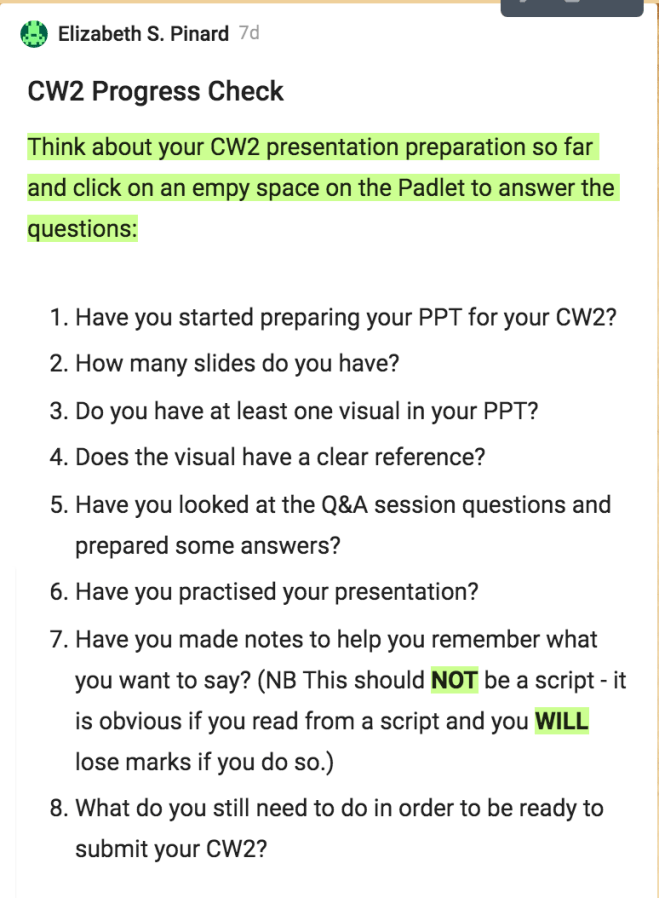

This was primarily a preparatory task for the main discussion but also paraphrasing skill practice. As well as review and practise of written paraphrasing, it encouraged students to pick out key arguments that they could use in the main discussion task. By now, students are used to using Padlet in our whole group sessions both with and without the breakout room/group component.

Positives of this task: useful skill practice, a preparation step for the main discussion, has a tangible/monitorable output (student posts on the padlet)

Problems with this task: my instructions weren’t clear enough – in hindsight I should have included an example post on the padlet!; it took even longer than I had anticipated, which probably also relates to the instructions not being clear enough (fortunately, as has been mentioned previously, timing is very flexible in these sessions this term!); I used the comment function on Padlet to give live feedback/guide students but not all groups noticed the comments as they are not as immediately visually evident as the equivalent on a Google doc would be (I dealt with this by going into breakout rooms and drawing students’ attention to the comments!); my post-task feedback again needed more thought (work in progress!).

This was the final preparation task before the main discussion task. The goal was to give students time to consider the arguments linked to the alternative viewpoint and possible responses to these, so that the main task discussion could be of a higher quality.

Positives of this task: It used the output of the previous task (the arguments on the padlet) with a focus on how they would be used in the subsequent task, which adds coherence to the lesson arc and hopefully means students can see why they are doing what they are doing – there is a clear direction to the tasks.

Problems with this task: students could think “I’ll manage with the discussion, I don’t need to do this task”; any given student’s experience of this task would vary depending on how forthcoming or not their group-mates were. Group dynamics in the online setting is something I need to think about more – how to help students to work well together in groups, in breakout rooms. Maybe add more structure to breakout room tasks e.g. start them with some kind of mini-activity where students have to write something in the chat box, before moving onto using the audio and doing the actual task at hand.

(No, I don’t know what happened to my grasp of the alphabet in these lesson materials! I think I was so focused on the task content that I forgot to pay attention to numbering/lettering!)

So, the main task! Group discussion requiring use of the sources found for homework (research skills), the key arguments identified, paraphrased and considered in the course of this lesson and language for referring to sources verbally.

Positives of this task: Brings together everything the students have done from homework through to final discussion preparation

Problems with this task: As far as I was able to tell, only one out of 4 groups did the task properly! I think again what was missing was a clear feedback stage which students would be made aware of in advance of starting the task and which required them to DO the task fully in order to complete it; students who want to do the task properly but are in a group with students who are more interested in slacking off lose out (I had one student who, when I was in the breakout room monitoring/checking on them, tried to give her opinion and elicit others’ opinions but radio silence followed!).

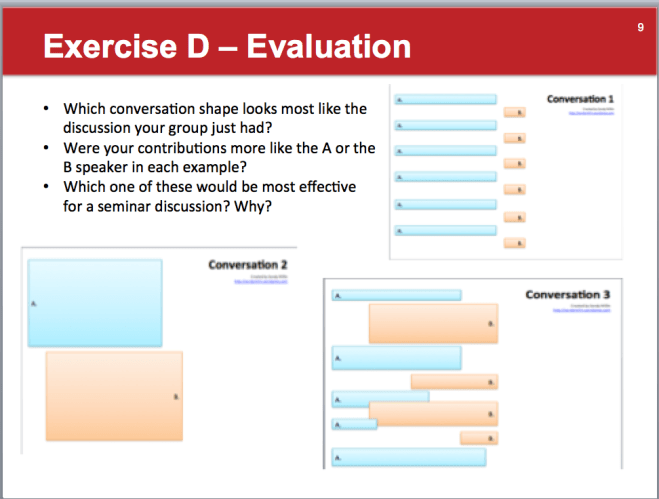

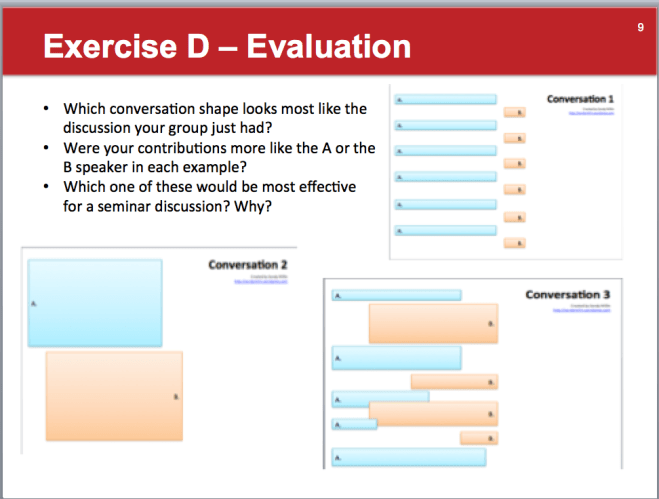

This evaluative element of the lesson comes from Sandy’s recent blog post about conversation shapes. (Although it might be hard to see in this screenshot of the slide, depending on the resolution of your screen, when displayed as a pdf of a ppt in Blackboard Collaborate, the credits were clearly visible!) Unsurprisingly, for the group who did have their discussion, it looked most like conversation 2. As a class, we identified that conversation 3 would be most effective – contributions of varying length, responding to the other speakers’ contributions, building on other speakers’ contributions. Obviously in groups, there would be more than 2 speakers but the students didn’t seem to have any problems applying the visuals to a group discussion.

Positives about this task: It was great to have a visual way to think about the discussions the students had had (those who had had them!! But I figure for those who bothered less, this was still useful and could be considered in terms of previous discussions). Having identified that 3 would be the most effective, this can be revisited in future speaking lessons as a prompt in advance of discussion tasks. Could also consider what language and cues would help to build a discussion like this e.g. agreeing and disagreeing language that allows connection to what has been said (that’s a good point, but…/yes, I completely agree, also…), back-channeling etc.

Problems with this task: I probably didn’t go far enough with it. Although, possibly this is not a problem but rather a slow-burn thing that bears plenty of revisiting and therefore doesn’t require lengthy input around it straight away. I think in future I will introduce this after the first suitable seminar discussion practice that students do in the course and revisit it and build on it regularly e.g. have example discussions to match to each shape, the language input as mentioned above etc. (Thank you, Sandy!)

The final task of the lesson was a reflective task, with the output going onto a padlet. Reflection is a key component of learning, of course, and actually these students by and large did a good job of this. This is something I need to capitalise on more in future lessons.

Positives of this task: made students think about what they’d done and evaluate it; those who didn’t speak recognised it in their answers (it’s something!);

Problems with this task: Too many closed questions – need to push them further than that, closed questions are fine but then a follow-up question could be good.

This task reflected weekly lesson content for week 3. In practice, the students had very little in-class time to start it, because all the teacher-led tasks (as above) took a fair amount of time to do, but students are accustomed to fairly substantial homework tasks and, as this was part of Lesson 3CD, it also factors into their asynchronous learning time.

Overall, Week 3 was a useful learning curve for me. There were plenty of positives, there are plenty of things to work on. I find it really useful to consider each lesson in these terms, think about what went well, what didn’t work and how you’d do it differently next time to make it work better, and think about how to reflect what you’ve learned more immediately in subsequent lessons – I guess that is what reflective teaching and learning is all about!

Week 4

Well…you know those lessons where you think you’ve made a really quite good lesson plan and have high hopes for how the lesson will go, but the reality turns out… rather differently? That was week 4’s lesson for me. The theme for Week 4 was Scientific Controversy. The asynch materials included a listening practice based on a panel discussion about genetic modification, which I asked the students to do in advance of the class as preparation. Though it was homework, it wasn’t extra in the sense that it was part of the core asynch materials for the week.

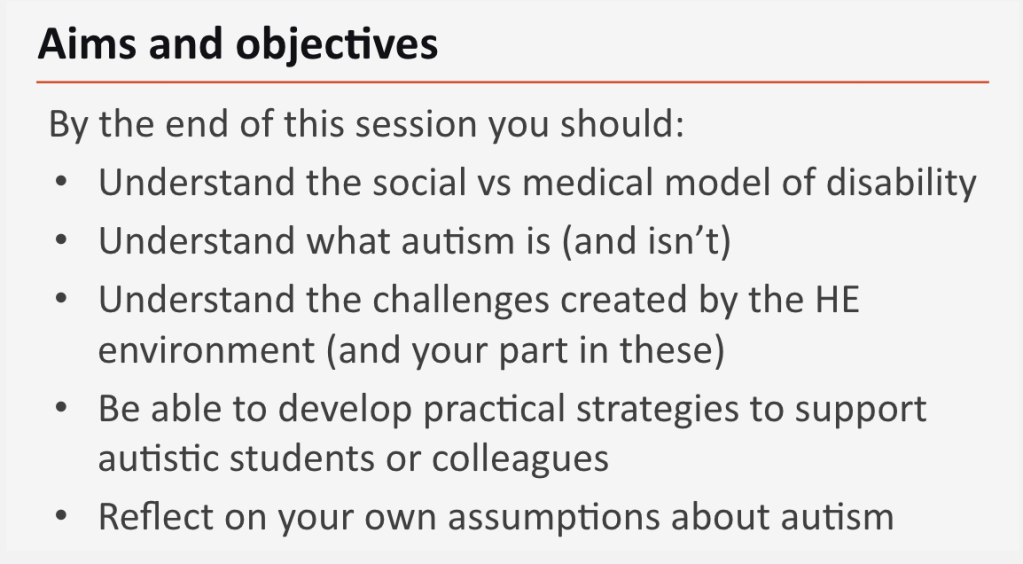

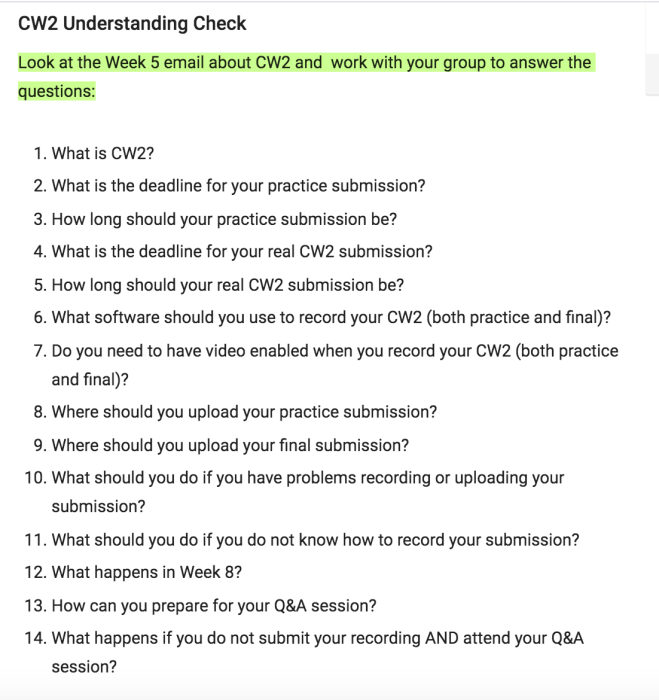

I began the lesson in the usual way – with a chat box warmer. Today I asked them to pick one adjective that most describes them right now and write it in the chat box. 9/14 responded – tired, exhausted, sleepy, blue, sleepy, energetic, sleepy too, calm, hungry. I acknowledged and responded to all their responses. Then we looked at the lesson objectives. In this lesson, I put extra effort into making sure the lesson objectives were clear and carried through the lesson, so that students could see where they were in relation to the objectives, see progress being made and see how tasks relate to the lesson objectives (I’d read, or watched, I forget which, about the importance of doing this). I did this by repeating the objectives slide at appropriate intervals, highlighting each objective as it was focused on and putting a tick by each objective as it was met. Here is an example:

The first stage of the lesson was a language review stage.

This stage included a definition check for controversy and scientific controversy and a series of pictures of example scientific controversies for which students had to guess what scientific controversy was being illustrated. Here is an example:

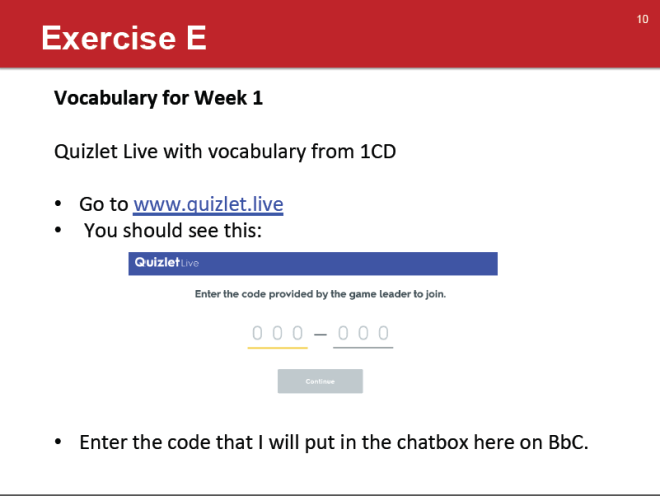

The students responded, and a good pace was maintained. I could perhaps have done more with the second question, tried to get students to share more ideas, but knowing I had some meatier tasks later in the lesson, I didn’t want to spend too long on this one. The final task of the first stage was a quick Quizlet review of some vocabulary from the homework asynch materials. 11/14 did it, which was an improvement on Week 2! I haven’t tried the team/breakout room version yet – that may be for next week!

Positives for this stage: Pacing, student response, topic and activities connected to asynch materials so provide review opportunities, use of pictures.

Problems with this stage: The second question on the picture slides got neglected. I think when it was unfolding, I worried that if I pushed the second question, the amount of time they spent typing would negatively affect the pace/mean too long was spent on the activity.

The next stage of the lesson was reviewing the listening homework.

I started with these questions:

As you can see, I messed up the formatting for this slide so the “Write yes or no” looks like it only relates to question 3. I corrected it verbally but only got ‘no’s from those who responded. Hoping this was for the third question, I reminded them about the online mock exams available, the importance of practice and that there would be opportunity for practice during this lesson too.

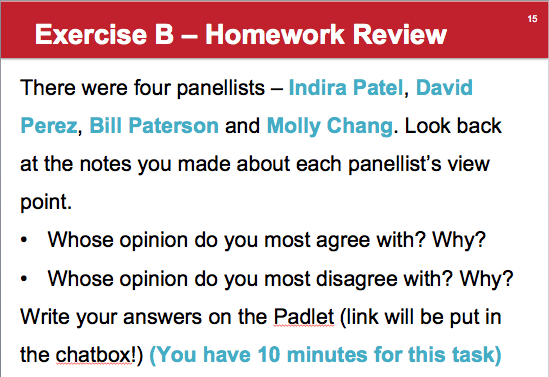

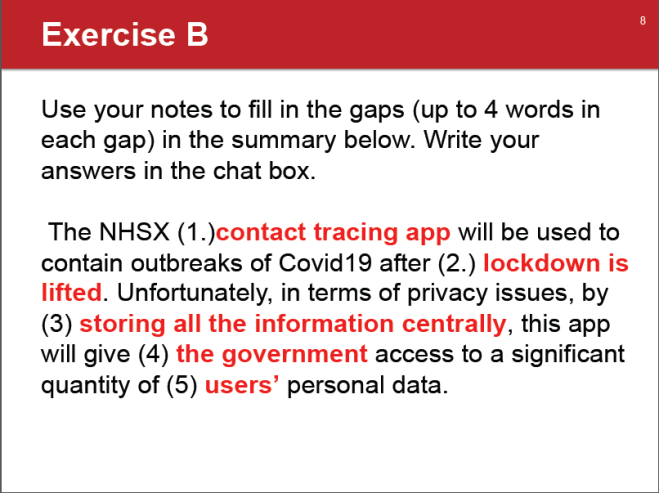

This next task was supposed to be a fairly quick and easy way of getting them to show their understanding of the opinions voiced in the panel discussion:

Nobody did it. Nobody responded when I asked why nobody had started doing anything a few minutes later. Eventually I said ok give me a smile emoji if you did the listening homework and a sad face emoji if you didn’t. I only got sad faces. So this task flopped completely. The next one was also not going to be possible as it reviewed the target language from the aforementioned homework:

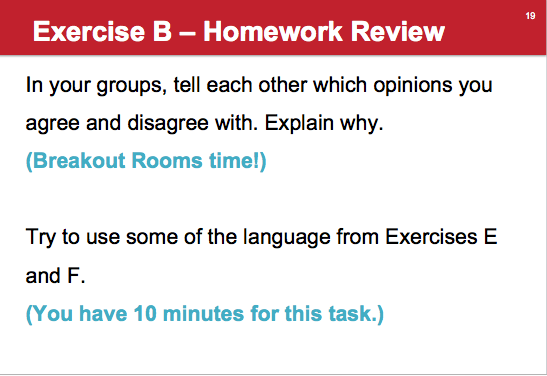

So I skipped to the point where I displayed the target language and we related it to the conversation shapes we’d looked at in Week 3 and then moved on to the final review task:

(The opinions referred to are those of the panel speakers again.) Obviously this needed a workaround due to the lack of homework issue, so I had them open up the relevant powerpoint which had notes relating to each panellist’s views and got them to tell me via the chatbox when they had done so.

Positives about this stage: It had a mixture of chatbox and breakout room activities, and focused on the content and the language of the listening homework. I had some workarounds for lack of homework.

Problems with this stage: It relied on students having done the homework! The padlet task had no workaround for zero homework completion (I was working on the basis that at least SOME of them would have done it and be able to post on the padlet, and the rest could interact with that using the comments).

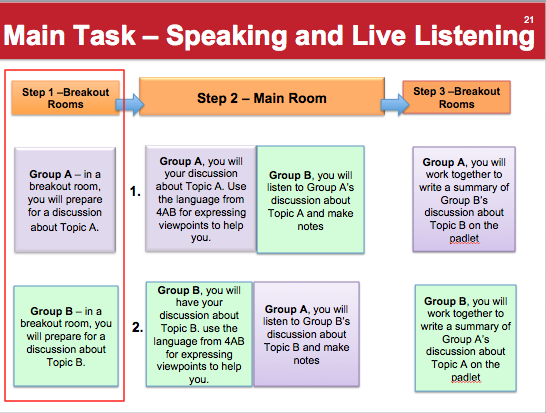

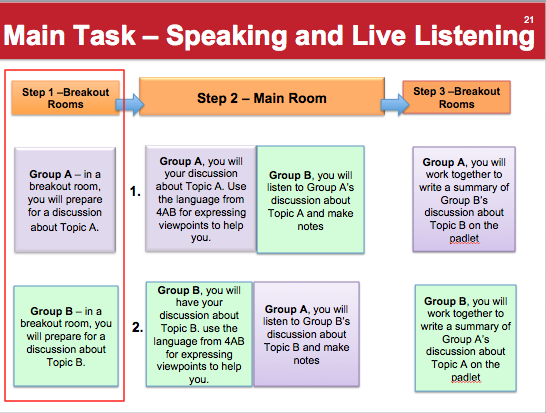

The next and final stage of the lesson was the speaking/live listening stage:

I made this slide a) to give students an overview of this stage of the lesson and b) to insert at the relevant intervals to show which phase of the task we were moving on to. More detailed instructions for each step came at the start of each step. I had hoped this overview would motivate the students to carry out each step as they would know the following steps relied on it and have a clear picture of what they were working towards.

In practice, I put the students into breakout rooms, having set up the task, and went in to each room to check on the students. Group A gave me radio silence. No response. No audio, nothing in the chatbox, whatever I said. So I reiterated what they needed to do and said I would be back in 10 minutes to check on them (the preparation stage was 20 minutes). Group B had some students who did engage and some who did radio silence. Thank God for the ones who did! They asked questions about their topic, I checked their understanding of the task and then I left them to it for a bit (again promising to return in 10 minutes to check on them). At the relevant point I went back to Group A, knowing full well that the chances of them having done anything since I left (no activated mics had appeared at any point) were slim (they could have used the chatbox…they hadn’t!). I tried again, more radio silence. Group B, again, had made progress when I went in to check on them. Then I brought everyone back to the main room. Except…most of Group A didn’t appear/reconnect. (So, presumably, they had done the log on and bugger off thing!) Obviously the plan in the slide above was a write-off (the members of Group A that did show up were still radio silence when addressed/instructed!). In the event, Group B did their discussion and I gave them some feedback, again referring to conversation shapes.

Positives of this stage: It was clearly staged. The group that did the parts that they were able to do made a good effort. (I feel for them, being so outnumbered by ones who won’t participate…)

Problems with this stage: It relied on student participation! Step 3 relied on Step 2 being carried out to some degree of success. Too ambitious? But these ARE pre-masters students, it shouldn’t be! There again, they are all knackered (see chatbox warmer – though Mr Energetic? Group A. Just saying.) If the stage had worked as planned, students may have struggled to summarise the other group’s discussion because poor audio quality makes it harder to follow what is being said.

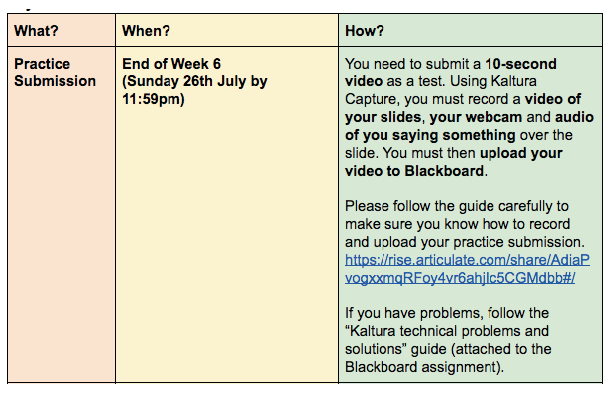

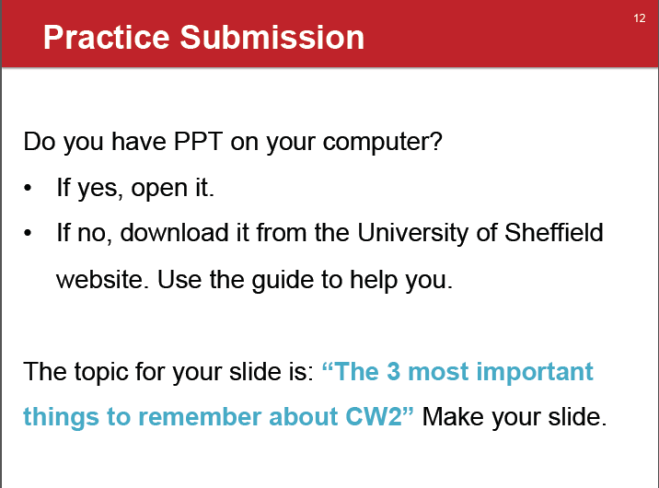

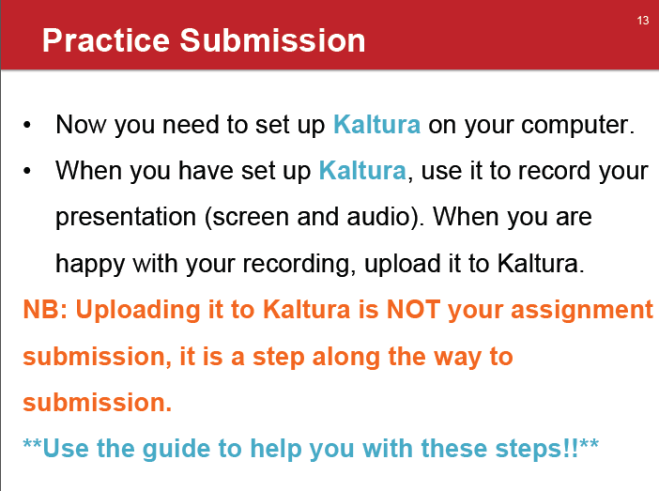

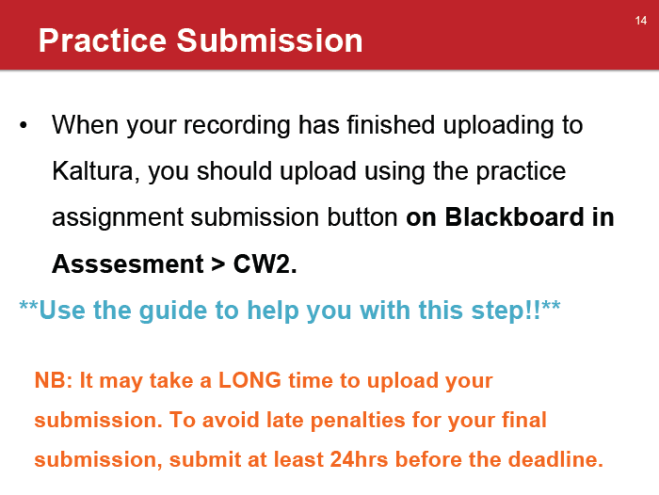

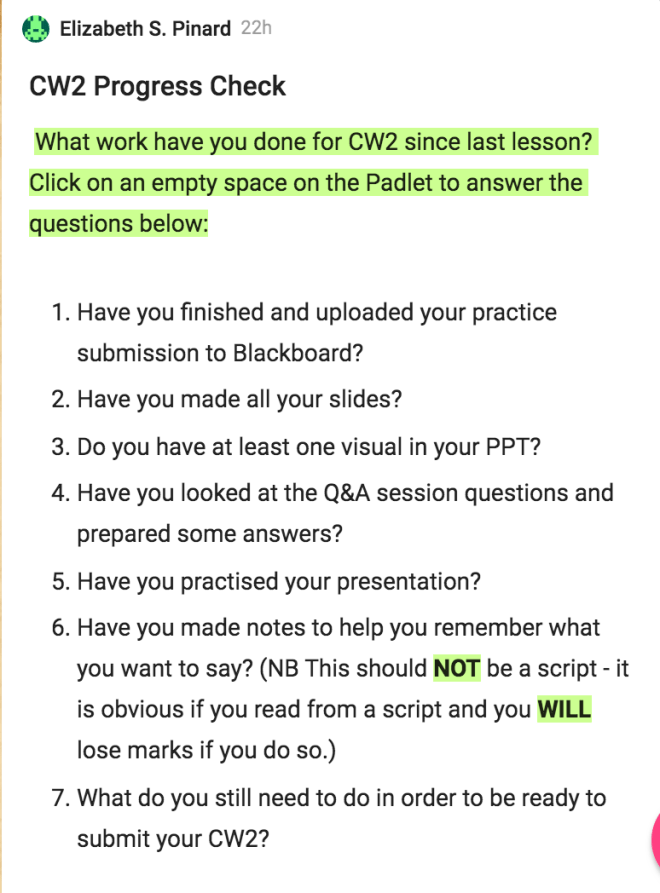

What am I taking away from these 2 weeks? That I want an article/book/video about classroom management with online platforms! Though quite what can be done if students are completely unresponsive, I’m not sure. I have worked really hard on making everything as clear and as meaningful as possible, in terms of tasks and objectives, which I am pleased with. I continue to try different task types and see what does and doesn’t work (with this group). Possibly I approached it wrongly overall – I tried to connect to the asynchronous material and give students engaging tasks that would help them develop their academic skills and prepare for exams, but maybe I should have focused more on their coursework. The next and final big thing students have to do in terms of coursework is prepare and submit a presentation recording, so my final 2 lessons will focus on that! I can but do my best. Importantly, I seem much better able to accept things going wrong, take what I can from it and not beat myself up over it than I have been in the past. I think this links with having had a really supportive line manager/programme leader for a year now – work-related anxiety levels are a lot lower than they used to be – and also, of course, that it has been 1.5yrs now of using Mindfulness to cope better with life, including work.

Watch this space to find out what happens in the last instalment of my teaching reflections for this term. The main purpose of these posts is to be my memory, outsourced, when I come to planning lessons next term with a new group of students! Space and time will make it easier to incorporate what I have been learning these last 4 weeks (lots of learning, hard to keep up but I am doing my best!). The course will look a bit different, and is still under construction, but since it will be what it is from the start, rather than a change being thrust on students part way through, there will be a lot more scope for setting clear expectations and instilling good habits etc from the beginning AND the university will have made it so that students can access Google suite from China yayyy (I forget the technical details but it is some kind of VPN they are purchasing that enables it) – so, exciting times ahead!